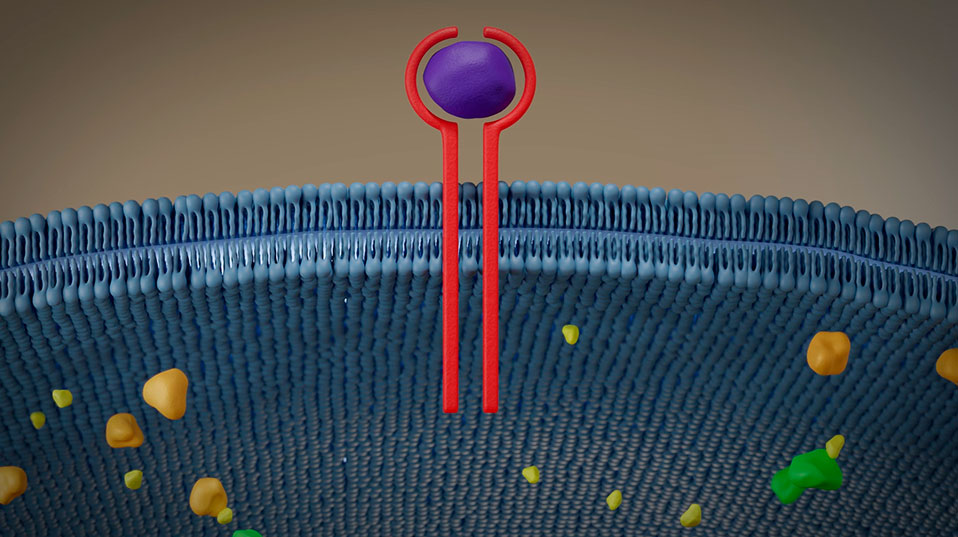

Background

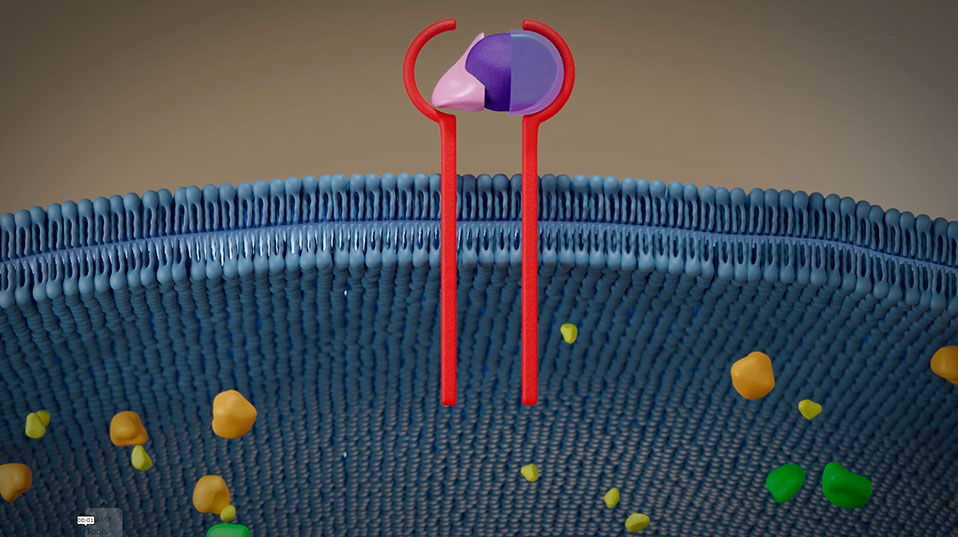

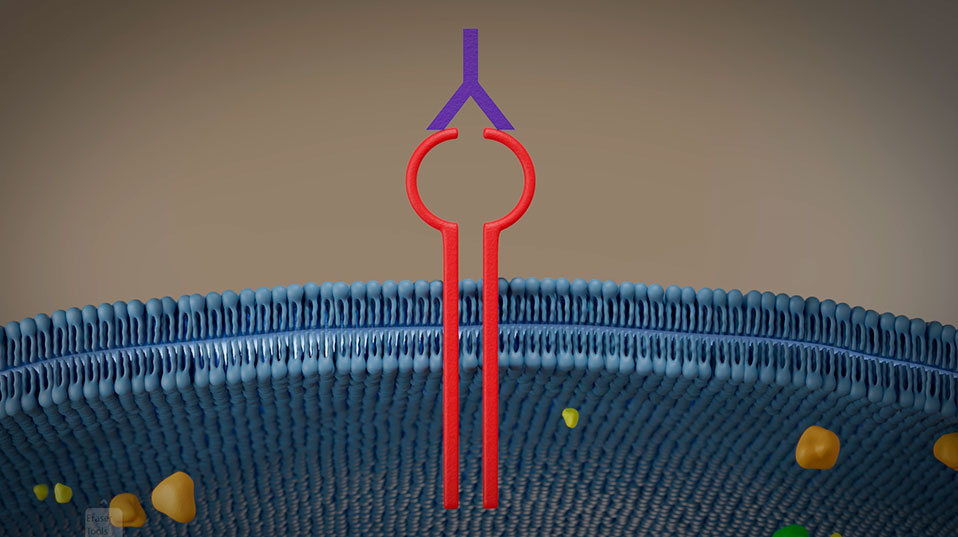

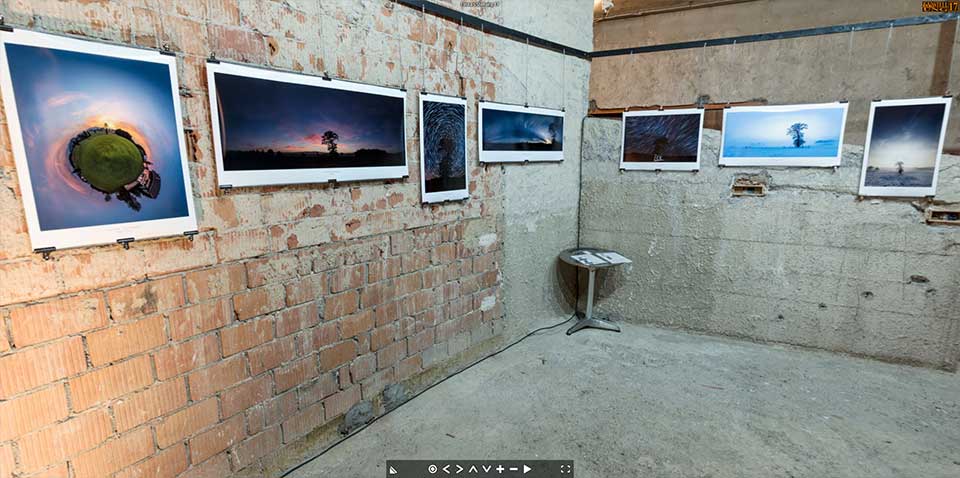

This post introduces 3D virtual-virtual galleries. The idea behind them is to create online galleries, similar to virtual tours made from 360-degree photographs, but in full 3D.

Not only is the presentation virtual, the content shown is also produced virtually in 3D. Hence «virtual-virtual». But a cleverer name was needed, since «virtual-virtual virtual gallery» would not only be a tongue twister, but also lame.

To me, the name «VirtueAlley™» combines two components that fit well together.

Virtue stands for value, merit or worthiness. A possible description would be: «a quality that gives something special worth».

Alley stands for path, lane or route. To me, it symbolizes the path I am on, and it also has the touch of a side alley lined with art shops in Paris.

Hence the term «VirtueAlley™» was born.

Demo

Click here to see the interactive demo

And here is a video of it.

Technical Aspects

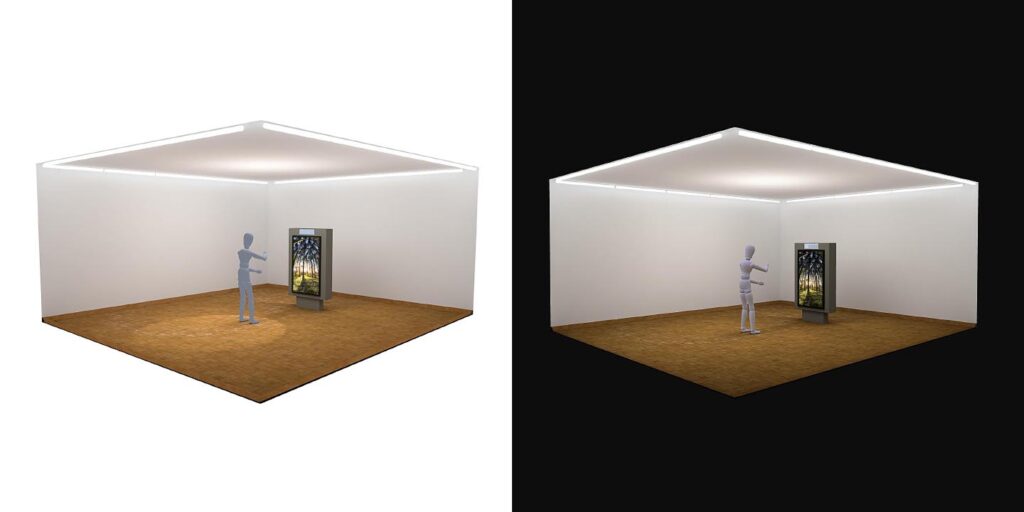

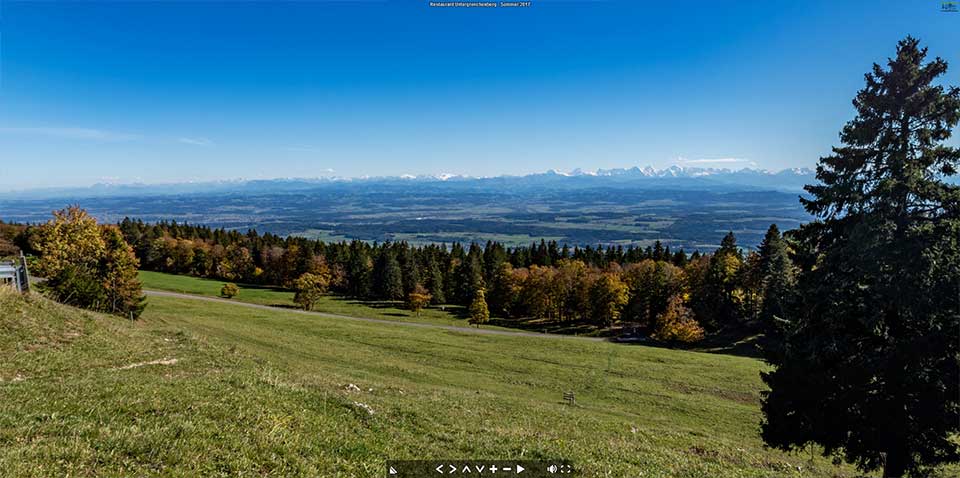

Creating 3D for the web is something I have followed closely for the past 30 years, always from a content creator's point of view. Always on the lookout for inspiring ideas and concepts, I came across a Lightroom plugin called Galeryst in one of the Adobe newsletters. It lets photographers create real 3D interactive galleries directly from Lightroom.

While this is definitely a very viable tool for photographers to present their work online in a very integrated way, the visual appearance of Galeryst is debatable. I learned that the developer has focused on the technical side, especially the expandability of the procedurally developed galleries and its integration into industry-standard tools and workflows.

Being an artist with very mediocre programming skills, I tackled this from the visual end. So I was very happy to discover the BabylonJS framework as described here.

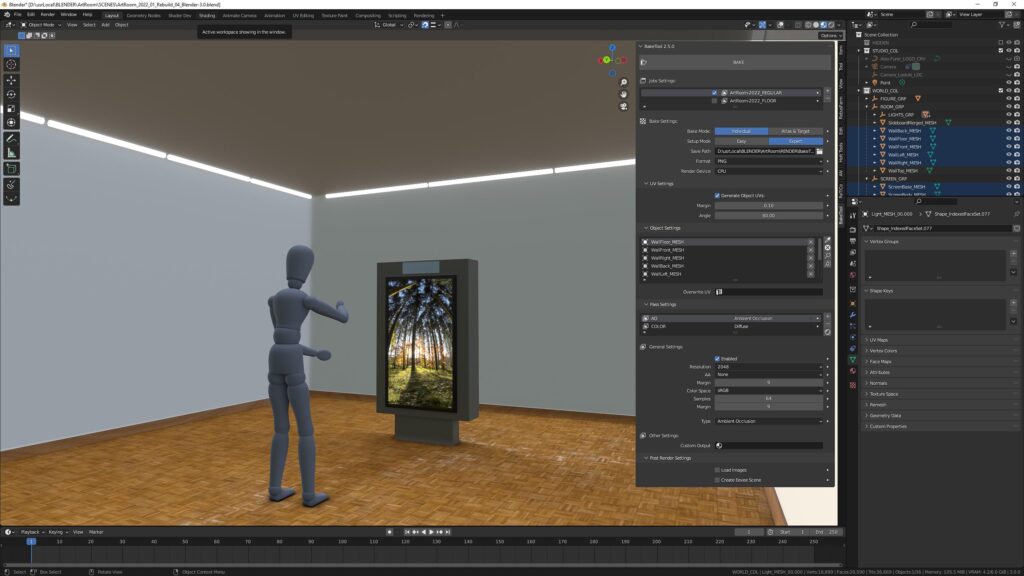

Since I had my ArtRoom already revamped and ready to go, it was a breeze to load it into a canvas inside an HTML page with BabylonJS. The way I go about coding projects is always the same: I search for lines of code that do what I need and then fiddle around until they work within my project. Fortunately, BabylonJS hosts an online editor and a very large library of examples, and it has excellent documentation.

You might be familiar with this feeling: «One is always more clever after the fact». No difference here. I always start somewhere, using the tools I am aware of, and once a run-through of such a research project is complete, new ways will have shown up. Most likely, the path I take on the first run is more cumbersome than it needs to be.

Next Steps / Vision

The sky is the limit, right? So yeah, here’s what’s on my mind:

- Add capability for the user to upload images to be hung and shown in the modal (lightbox)

- Automatically scale the polygons to the aspect ratio of the uploaded image

- Add capability for the user to hang the images where desired

- Add capability to draw a floor plan, including doors that connect to more rooms

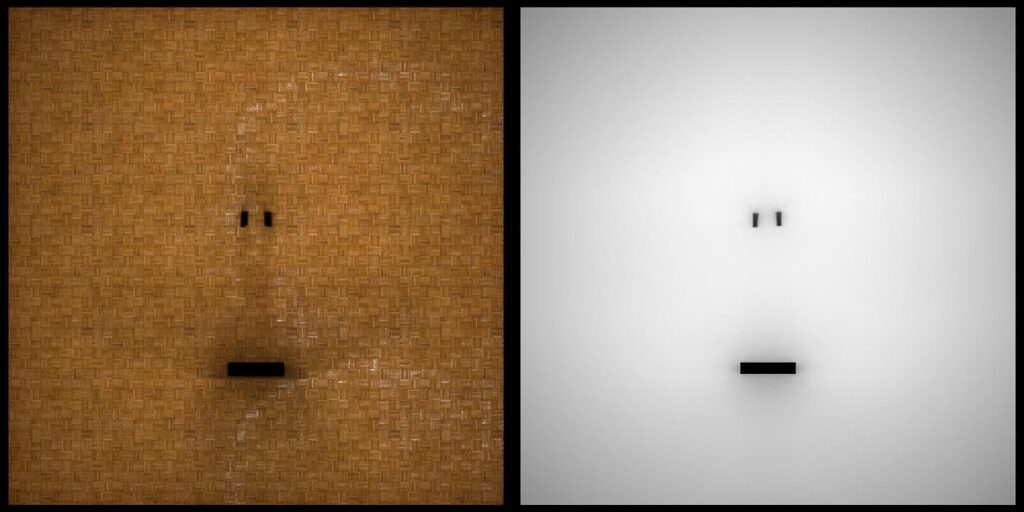

- Automatically bake the needed textures OR convert the demo to photorealistic rendering instead of baking
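The aspect-ratio item in the list above is mostly arithmetic, so here is a minimal sketch of how it might look. It is only an illustration: the function name `fitToAspect`, the `Size` type and the idea of a fixed wall slot measured in scene units are my assumptions, not something taken from the demo or from BabylonJS.

```typescript
// Hypothetical helper: none of these names exist in the demo or in BabylonJS.
interface Size {
  width: number;
  height: number;
}

/**
 * Fit an uploaded image into a wall slot without distorting it.
 * Image dimensions are in pixels; the slot and the result are in scene units.
 */
function fitToAspect(
  imageWidthPx: number,
  imageHeightPx: number,
  maxWidth: number,
  maxHeight: number
): Size {
  if (imageWidthPx <= 0 || imageHeightPx <= 0 || maxWidth <= 0 || maxHeight <= 0) {
    throw new RangeError("all dimensions must be positive");
  }

  const imageAspect = imageWidthPx / imageHeightPx;
  const slotAspect = maxWidth / maxHeight;

  if (imageAspect >= slotAspect) {
    // The image is relatively wider than the slot, so width is the limit.
    return { width: maxWidth, height: maxWidth / imageAspect };
  }
  // The image is relatively taller than the slot, so height is the limit.
  return { width: maxHeight * imageAspect, height: maxHeight };
}

// A 3000 x 2000 px landscape photo in a 1.5 x 1.5 unit slot.
const landscape = fitToAspect(3000, 2000, 1.5, 1.5);
console.log(landscape.width, landscape.height);
```

The resulting width and height could then be applied to whatever polygon holds the picture, for example through the mesh's scaling, so that a portrait and a landscape image both hang undistorted in the same spot.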

So, I need to put the big pants on and learn, learn, learn (or find some poor soul who codes this up for me 🙂)

- Get a real development environment up and running as described here

- Get the BabylonJS Editor up and running