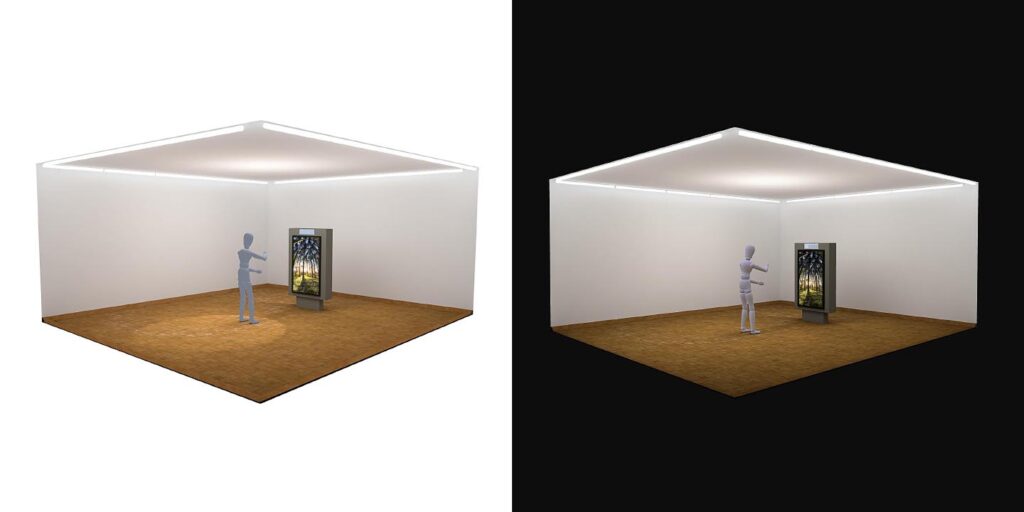

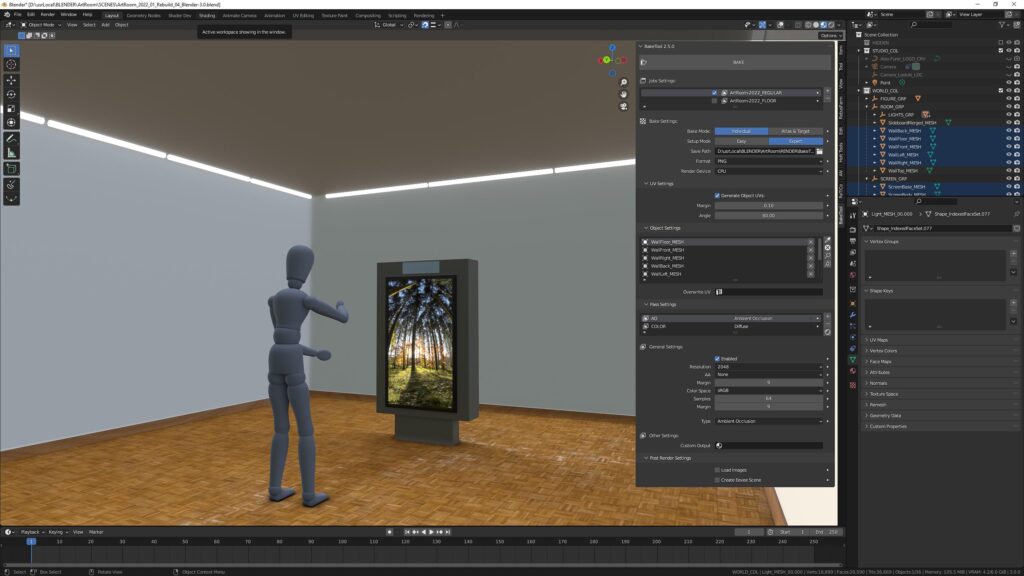

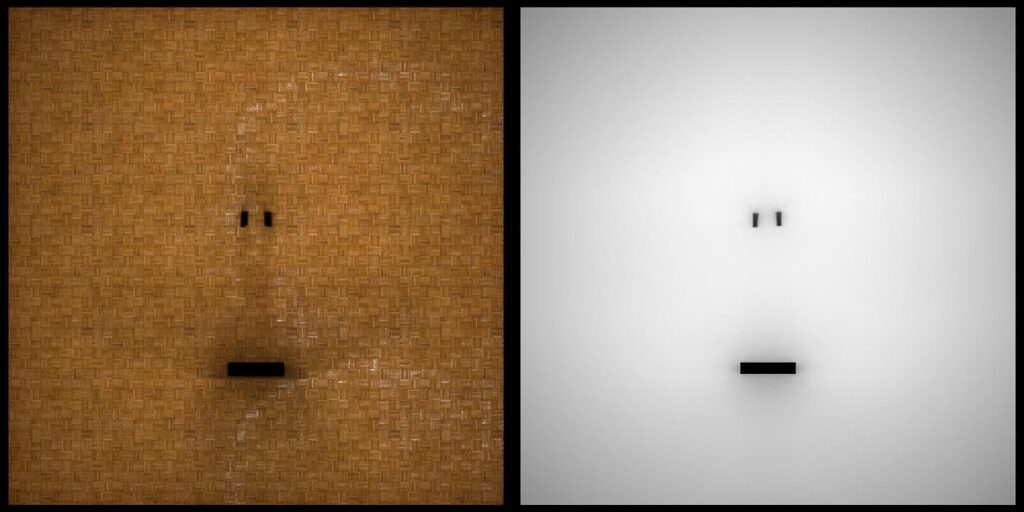

I revived and reworked the ArtRoom, then re-baked it in Blender and exported it for BabylonJS, specifically in the glTF (.glb) file format.

I mainly added plates to put my photography on. I also tried to add a dedicated environment to the scene, but somehow the .babylon file is broken after exporting. What I did was drag and drop the Blender export onto the BabylonJS sandbox and save it as both .babylon and glTF. For some reason the environment did not come across.

But since I set all objects to «unlit», this doesn’t really matter that much.
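To double-check that really every material came out unlit, something like the following could help. It is only a sketch: it assumes the unlit flag ends up in the exported glTF as the `KHR_materials_unlit` material extension, and that you already have the glTF JSON parsed (for a .glb that means extracting the JSON chunk first). The function name and the material names are made up for illustration.

```typescript
// Minimal slice of the glTF 2.0 JSON structure that this check needs.
interface GltfMaterial {
  name?: string;
  extensions?: Record<string, unknown>;
}

interface GltfDocument {
  materials?: GltfMaterial[];
}

// Returns the names of all materials that do NOT carry the
// KHR_materials_unlit extension, i.e. materials that would still
// react to lights and to the environment.
// (Hypothetical helper, not part of Blender or BabylonJS.)
function litMaterialNames(doc: GltfDocument): string[] {
  const lit: string[] = [];
  (doc.materials ?? []).forEach((material, index) => {
    const isUnlit =
      material.extensions !== undefined &&
      "KHR_materials_unlit" in material.extensions;
    if (!isUnlit) {
      // Fall back to the material index when the material has no name.
      lit.push(material.name ?? `material #${index}`);
    }
  });
  return lit;
}

// Example with made-up material names:
const example: GltfDocument = {
  materials: [
    { name: "Wall", extensions: { KHR_materials_unlit: {} } },
    { name: "Figure" },
  ],
};
console.log(litMaterialNames(example));
```

An empty result would mean that no material depends on the environment at all.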

Update April 16. The version above is heavily optimized: a bit moodier, with smaller textures and a figure polycount that I reduced more manually. The file went from 96 MB to 2 MB, and the workflow seems stable.

I export from Blender using the built-in glTF exporter, import that into the BabylonJS Sandbox, tweak a few things and export it from there. A very big help for all my work that ends up as JPG is Greg Benz’s WebSharp PRO. I highly recommend adding it to your workflow as well!

Create your own environment (still unsolved)

So, technically, to create an environment go here.

Then:

- drag and drop an HDR image

- save the env

- drag and drop the saved .env scene onto a sandbox scene

But as said above, somehow the exported scene is broken. It cannot be re-imported to the BabylonJS sandbox…
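I have not found the cause yet. A first sanity check on the exported files could look roughly like this. It is a sketch, not a fix: it only tests whether a .glb starts with the 12-byte header described in the glTF 2.0 specification, and whether a .babylon file (which is JSON text) parses at all. The function names are my own, and passing both checks does not prove that the sandbox will accept the file.

```typescript
// Result of a quick file sanity check.
interface FileCheck {
  ok: boolean;
  reason: string;
}

// ASCII "glTF" read as a little-endian uint32.
const GLB_MAGIC = 0x46546c67;

// Checks the 12-byte GLB header: magic, version and total length,
// each stored as a little-endian uint32.
function checkGlbHeader(bytes: Uint8Array): FileCheck {
  if (bytes.byteLength < 12) {
    return { ok: false, reason: "shorter than the 12-byte GLB header" };
  }
  const view = new DataView(bytes.buffer, bytes.byteOffset, bytes.byteLength);
  const magic = view.getUint32(0, true);
  const version = view.getUint32(4, true);
  const declaredLength = view.getUint32(8, true);
  if (magic !== GLB_MAGIC) {
    return { ok: false, reason: "magic bytes are not 'glTF'" };
  }
  if (version !== 2) {
    return { ok: false, reason: `unexpected container version ${version}` };
  }
  if (declaredLength !== bytes.byteLength) {
    return { ok: false, reason: "declared length does not match file size" };
  }
  return { ok: true, reason: "GLB header looks valid" };
}

// Checks that the text of a .babylon file parses as a JSON object.
function checkBabylonJson(text: string): FileCheck {
  try {
    const parsed: unknown = JSON.parse(text);
    if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
      return { ok: false, reason: "top level is not a JSON object" };
    }
    return { ok: true, reason: "file parses as a JSON object" };
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    return { ok: false, reason: `invalid JSON: ${message}` };
  }
}
```

If the .babylon file already fails to parse as JSON, the export itself was probably cut off or corrupted; if it parses, the problem is more likely in the scene content.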

Next Steps

- Next step would be to code it up and make the images clickable.
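As a starting point for the clickable images, here is a rough sketch of the lookup part. The mesh names and image paths are placeholders I made up, not the real names in my scene. The function only decides which image belongs to a picked mesh; the commented-out lines show how it could be hooked into BabylonJS via `scene.onPointerDown`, which I have not tested yet.

```typescript
// Minimal shape of a pick result. BabylonJS' PickingInfo provides
// these two fields, so a real pick result can be passed in directly.
interface PickLike {
  hit: boolean;
  pickedMesh: { name: string } | null;
}

// Hypothetical mapping from plate mesh names to full-size image URLs.
const plates: ReadonlyMap<string, string> = new Map([
  ["Plate_01", "photos/photo-01.jpg"],
  ["Plate_02", "photos/photo-02.jpg"],
]);

// Returns the image URL for the clicked plate, or null when the click
// missed everything or hit a mesh that is not a plate.
function imageForPick(
  pick: PickLike,
  plateMap: ReadonlyMap<string, string>
): string | null {
  if (!pick.hit || pick.pickedMesh === null) {
    return null;
  }
  return plateMap.get(pick.pickedMesh.name) ?? null;
}

// Possible wiring inside a BabylonJS scene (untested assumption):
//
// scene.onPointerDown = (_evt, pickInfo) => {
//   const url = imageForPick(pickInfo, plates);
//   if (url !== null) {
//     window.open(url, "_blank");
//   }
// };
```

Keeping the lookup separate from the scene code means it can be tried out without loading the 3D scene at all.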